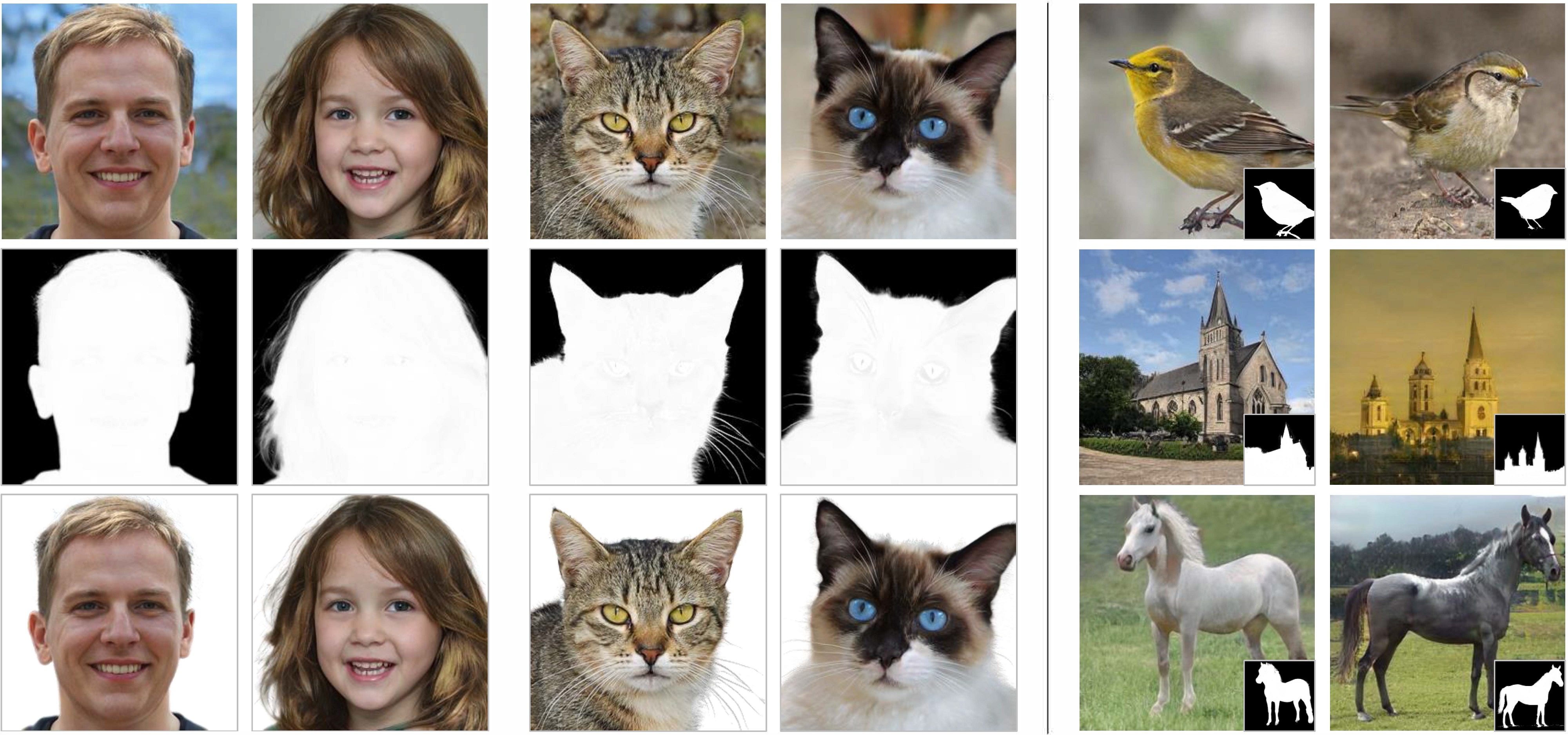

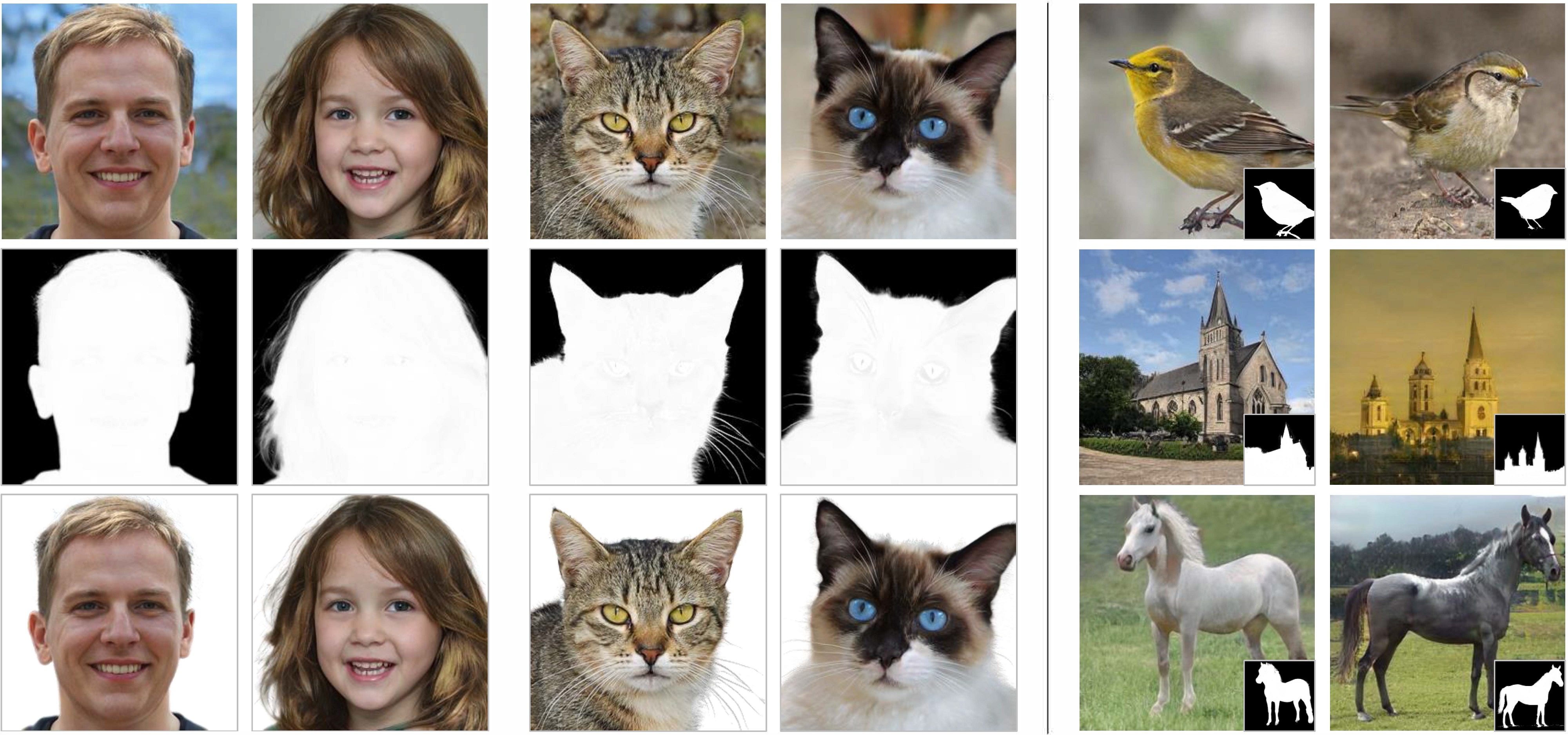

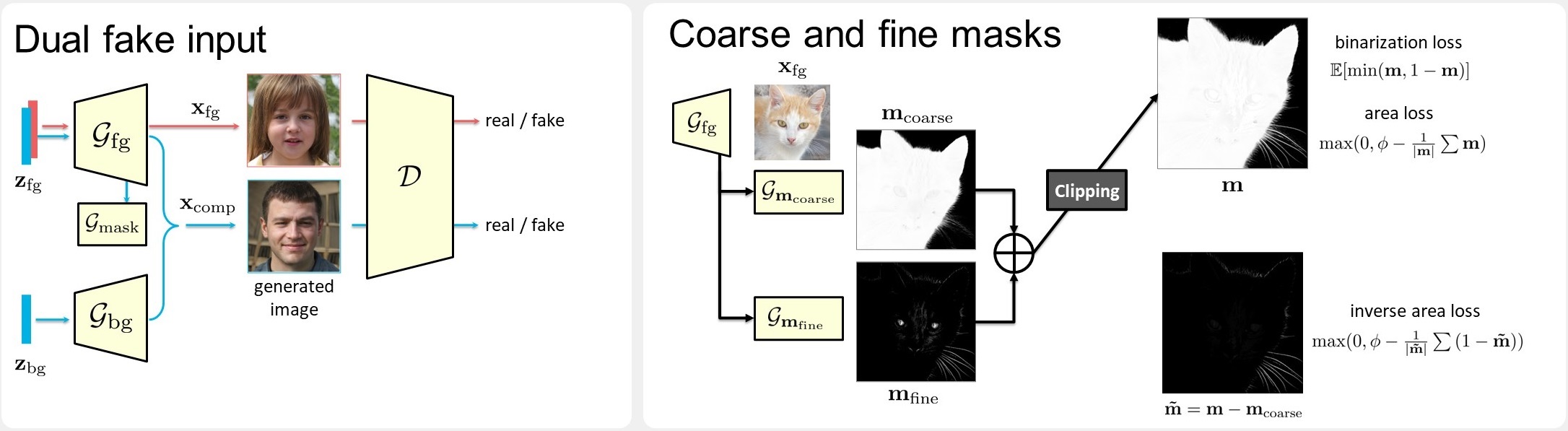

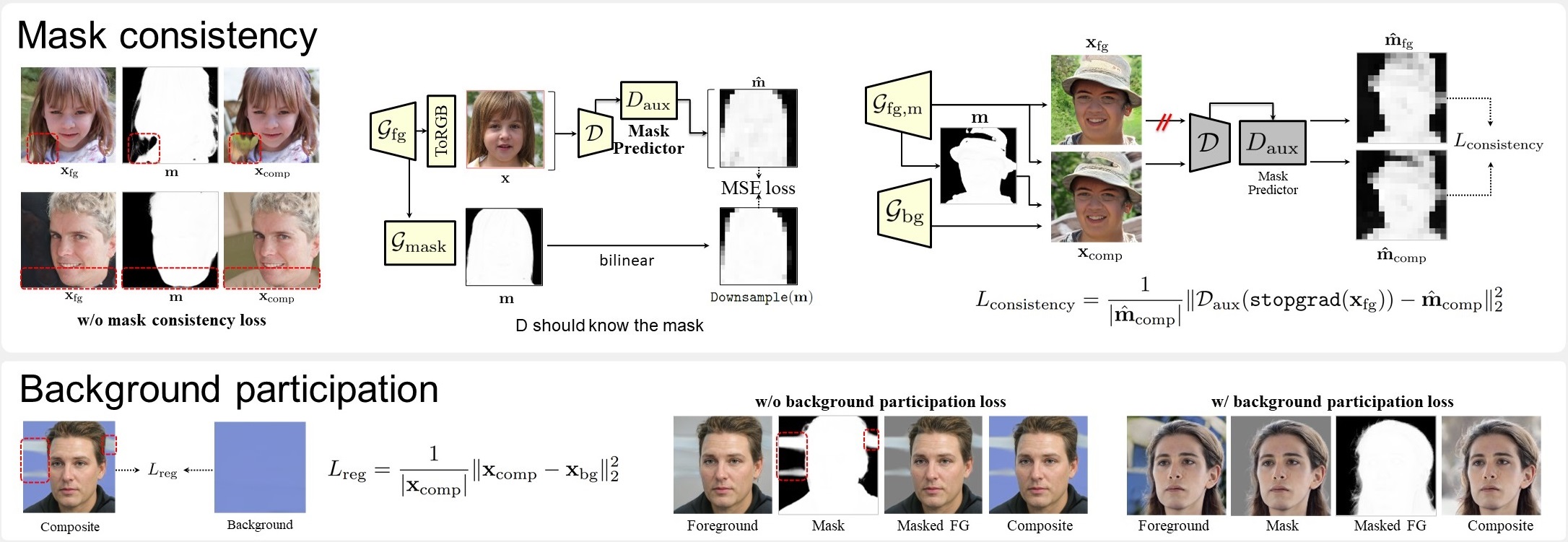

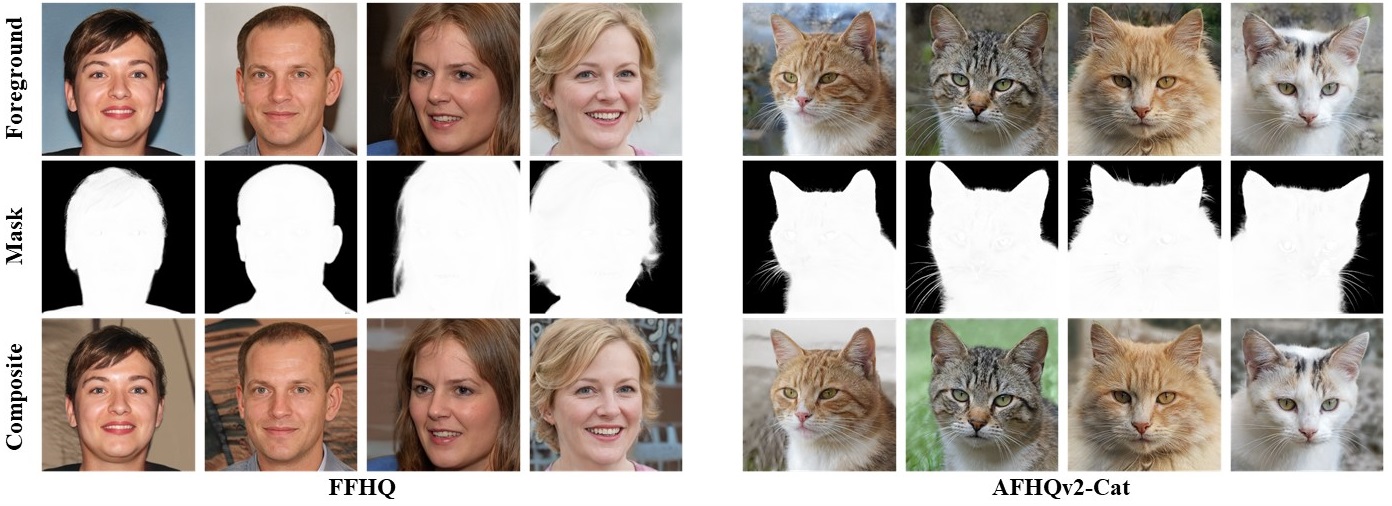

Foreground-aware image synthesis aims to generate images together with their foreground masks. A common approach is to formulate an image as a masked blending of a foreground image and a background image. The problem is challenging because training tends to collapse to a trivial solution in which one image overwhelms the other: the masks become completely full or completely empty, and the foreground and background are not meaningfully separated. We present FurryGAN with three key components: 1) requiring both the foreground image and the composite image to be realistic, 2) designing the mask as a combination of coarse and fine masks, and 3) guiding the generator with an auxiliary mask predictor in the discriminator. Our method produces realistic images with remarkably detailed alpha masks that cover hair, fur, and whiskers in a fully unsupervised manner.

FurryGAN has three generators, one each for the foreground, the background, and the mask, and produces composite images through alpha-blending. We ensure that the foreground images contain salient objects by using the dual fake input strategy. Our mask generator consists of a coarse mask network and a fine mask network that captures fine details.
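The alpha-blending step and the dual fake input idea can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function names `alpha_blend` and `dual_fake_inputs`, the array shapes, and the toy data are assumptions made for illustration only. It shows how a mask in [0, 1] mixes the two images, and why a completely full mask reduces the composite to the foreground alone.

```python
import numpy as np


def alpha_blend(foreground, background, mask):
    """Composite = mask * foreground + (1 - mask) * background.

    Images are H x W x 3 arrays; mask is H x W x 1 with values in [0, 1].
    """
    mask = np.clip(mask, 0.0, 1.0)
    return mask * foreground + (1.0 - mask) * background


def dual_fake_inputs(foreground, background, mask):
    """Return both images that would be shown to the discriminator as fakes.

    Hypothetical helper: per the text, the foreground image and the
    composite image are both required to look realistic.
    """
    composite = alpha_blend(foreground, background, mask)
    return [foreground, composite]


# Toy data (assumed shapes, random content).
rng = np.random.default_rng(0)
fg = rng.random((4, 4, 3))
bg = rng.random((4, 4, 3))

# A mask that selects only the central 2 x 2 region.
mask = np.zeros((4, 4, 1))
mask[1:3, 1:3] = 1.0

composite = alpha_blend(fg, bg, mask)
fakes = dual_fake_inputs(fg, bg, mask)

# Trivial solution: a full mask makes the background irrelevant.
full_composite = alpha_blend(fg, bg, np.ones((4, 4, 1)))
```

Inside the masked region the composite equals the foreground, and outside it equals the background. With a full mask the composite is identical to the foreground, which is the degenerate case the method is designed to avoid.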

Improper masks lead to inconsistent mask predictions between the foreground image and the composite image. We impose mask consistency using a mask predictor trained on generated images and masks. To prevent the alpha masks from spreading excessively, we penalize the difference between the composite image and the background image.
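The two regularizers described above can be sketched as follows. This is a hedged illustration, not the paper's loss definitions: the squared-error form of the consistency term, the L1 form of the background penalty, and the brightness-threshold `toy_predictor` standing in for the learned mask predictor are all assumptions.

```python
import numpy as np


def alpha_blend(foreground, background, mask):
    """Composite = mask * foreground + (1 - mask) * background."""
    return mask * foreground + (1.0 - mask) * background


def mask_consistency_loss(predict_mask, foreground, composite):
    """Mean squared gap between masks predicted from the two images.

    The squared-error form is an assumption; the text only states that
    the two predictions should be consistent.
    """
    diff = predict_mask(foreground) - predict_mask(composite)
    return float(np.mean(diff ** 2))


def background_penalty(composite, background):
    """Mean absolute difference between composite and background.

    The L1 form is an assumption; a larger mask generally changes more
    pixels relative to the background and so raises this value.
    """
    return float(np.mean(np.abs(composite - background)))


def toy_predictor(image):
    """Hypothetical stand-in for the learned predictor: bright pixels = object."""
    return (image.mean(axis=-1, keepdims=True) > 0.5).astype(np.float64)


# Toy scene: a bright 2 x 2 object on a black foreground canvas,
# and a uniformly dark background.
fg = np.zeros((4, 4, 3))
fg[1:3, 1:3] = 1.0
bg = np.full((4, 4, 3), 0.1)

good_mask = np.zeros((4, 4, 1))
good_mask[1:3, 1:3] = 1.0
empty_mask = np.zeros((4, 4, 1))
full_mask = np.ones((4, 4, 1))

good = alpha_blend(fg, bg, good_mask)
empty = alpha_blend(fg, bg, empty_mask)
full = alpha_blend(fg, bg, full_mask)

consistency_good = mask_consistency_loss(toy_predictor, fg, good)
consistency_empty = mask_consistency_loss(toy_predictor, fg, empty)
penalty_good = background_penalty(good, bg)
penalty_full = background_penalty(full, bg)
```

In this toy setup the well-placed mask gives a consistency loss of zero, while the empty mask drops the object from the composite and yields a positive loss. The full mask incurs a larger background penalty than the tight mask, which is the pressure that discourages masks from spreading.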

FurryGAN learns not only to generate realistic images, but also to synthesize alpha masks with fine details such as hair, fur, and whiskers in a fully unsupervised manner. Please check our paper for more results and visualizations.

Acknowledgements